WHIS: Hearing Support Ecosystem

A comprehensive platform integrating Speech-to-Text and Sign Language Dictionary for inclusive classrooms.

Applying computer vision and web technologies to solve real-world problems. Focused on creating explainable AI for medical diagnosis and assistive tools for education.



Explainable AI (XAI) model detecting 11 retinal diseases with Grad-CAM visualization for doctors.

Digitizing student health records with BMI tracking and growth analysis for early intervention.

Education, experience, and milestones

Completed two modules with merit. Strengthened foundations in deep learning, machine learning, and computer vision, then applied GenAI API programming to build practical AI applications.

Led the development of an AI-integrated learning platform for hearing-impaired students. Focused on accessibility, teacher support, and real classroom impact in Ho Chi Minh City.

Built core features as a full-stack project. Focused on a clean database structure, stable modules, and a smooth user flow for real use.

Developed an AI-driven vision screening and analysis system. Worked across training, evaluation, and explainability to deliver results that are accurate and trustworthy.

Supported a project led by my older brother by collecting and organizing a structured database. Learned collaborative workflow and teamwork practices with university student mentors.

Major reports and research outputs

Context & Problem: In Ho Chi Minh City, many deaf and hard-of-hearing students face barriers in learning and social integration due to limited visual learning materials and insufficient assistive technology. In practice, devices such as cochlear implants and hearing aids can be costly, while existing learning platforms largely provide general materials that are not aligned with Vietnam’s General Education Program (GDPT) for hearing-impaired learners. This gap is especially visible in English and general subjects (e.g., History, Geography), where students need sign-supported visuals, interaction, and personalization.

Prior to development, the project analyzed real classroom constraints and user needs, focusing on three themes: (1) economics & assistive devices, (2) curriculum & personalization, and (3) social inclusion. Findings emphasized the importance of visual materials and modern technology, and highlighted barriers such as communication difficulties and lack of sign-aligned learning resources.

WHIS is a multimedia online learning platform that integrates Vietnamese Sign Language support, interactive lessons, gamified exercises, and an AI layer for sign recognition. It is designed to improve learning accessibility, communication, and self-study capacity for hearing-impaired students.

WHIS follows a three-layer architecture: (1) Front-end for the web UI (HTML/CSS/JavaScript), (2) Back-end for application logic and APIs (Node.js + Express) with MongoDB for user data, learning progress, and recognition results, and (3) AI Processing Layer (Flask API) deploying MobileNetV2 for efficient sign recognition.

The platform was implemented using a Spiral development model combined with Agile iterations. Modules were developed in short sprints (about 1–2 weeks) with continuous testing and refinement based on user feedback.

The AI component was trained on a Vietnamese Sign Language dataset of 39,000 samples (Vietnamese alphabet + digits 0–9), with 1,500 images per class. Data collection involved deaf students (Hy Vong School, District 6), teachers, domain experts, and volunteers. A custom Python tool was built to capture video frames and upload data automatically. The data was split 80/10/10 (train/validation/test). Preprocessing included MediaPipe keypoint extraction, resizing to 224×224, and augmentation (e.g., sharpening with Gaussian blur and histogram equalization).
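The 80/10/10 split can be sketched as a per-class (stratified) split, so that every sign keeps the same proportion in each subset. This is a minimal illustration only: the function name, the fixed seed, the file naming, and the choice to stratify by class are assumptions, not details taken from the project.

```python
import random
from collections import defaultdict

def stratified_split(samples, percents=(80, 10, 10), seed=42):
    """Split (path, label) pairs into train/val/test, one class at a time."""
    by_class = defaultdict(list)
    for path, label in samples:
        by_class[label].append(path)

    rng = random.Random(seed)  # fixed seed so the split is reproducible
    splits = {"train": [], "val": [], "test": []}
    for label in sorted(by_class):
        paths = by_class[label]
        rng.shuffle(paths)
        n_train = len(paths) * percents[0] // 100
        n_val = len(paths) * percents[1] // 100
        splits["train"] += [(p, label) for p in paths[:n_train]]
        splits["val"] += [(p, label) for p in paths[n_train:n_train + n_val]]
        # Everything left over goes to test, so rounding never drops a sample.
        splits["test"] += [(p, label) for p in paths[n_train + n_val:]]
    return splits

# Toy data: two hypothetical classes with 1,500 images each.
samples = [(f"{label}/{i:04d}.jpg", label) for label in ("A", "B") for i in range(1500)]
splits = stratified_split(samples)
print({name: len(items) for name, items in splits.items()})
# → {'train': 2400, 'val': 300, 'test': 300}
```

With 1,500 images per class, each class contributes 1,200 training, 150 validation, and 150 test images.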

Training setup: fine-tuned MobileNetV2 with ImageNet weights using Adam (lr = 0.0001), 50 epochs, batch size 32, and categorical cross-entropy loss. Results: 98.57% test accuracy, 0.985 average F1, ~95 ms inference time, and a lightweight model size (~12 MB) suitable for resource-limited devices.
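The reported "average F1" does not say which averaging was used. As a sketch, assuming a macro average (the unweighted mean of per-class F1 scores), the metric can be computed from true and predicted labels as follows. The labels are toy values, not project data.

```python
def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores (macro averaging is an assumption)."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for label in labels:
        pairs = list(zip(y_true, y_pred))
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the class never appears.
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)

# Toy labels for three hypothetical sign classes.
y_true = ["A", "A", "B", "B", "C", "C"]
y_pred = ["A", "A", "B", "C", "C", "C"]
print(round(macro_f1(y_true, y_pred), 4))
```

Macro averaging treats every sign equally, which suits a balanced dataset with the same number of images per class.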

A 4-week experiment was conducted at Hy Vong and Anh Duong School for the Deaf (HCMC) with 29 students, 7 teachers, and 18 parents. In phase 1, teachers guided in-class usage; in phase 2, students used WHIS at home with parent support to assess self-learning. The team evaluated the system using a questionnaire with 12 criteria rated on a 5-point Likert scale, plus semi-structured interviews.

Quantitative results: mean scores ranged from 3.72 to 4.24. Highest-rated items included “Learn English” (4.24) and “Activities/Games” (4.21). “Ease of Use” (3.72) and “Performance” (3.76) were satisfactory but highlighted as areas for UX/performance refinement. Overall attractiveness and interface design received positive ratings, and reuse intention showed a positive trend.

WHIS demonstrates strong potential for improving inclusive learning through sign-supported multimedia lessons, interactive activities, and AI-assisted practice—while remaining cost-effective and scalable for real school contexts.

Context & Problem: Over 2.2 billion people worldwide live with vision impairment, and a large portion of cases can be prevented with early detection. Fundus photography is affordable and non-invasive, but manual diagnosis is time-consuming and limited by the shortage of eye specialists - especially in underserved areas. Most existing AI tools focus on a single disease, which is less practical for real-world clinical screening.

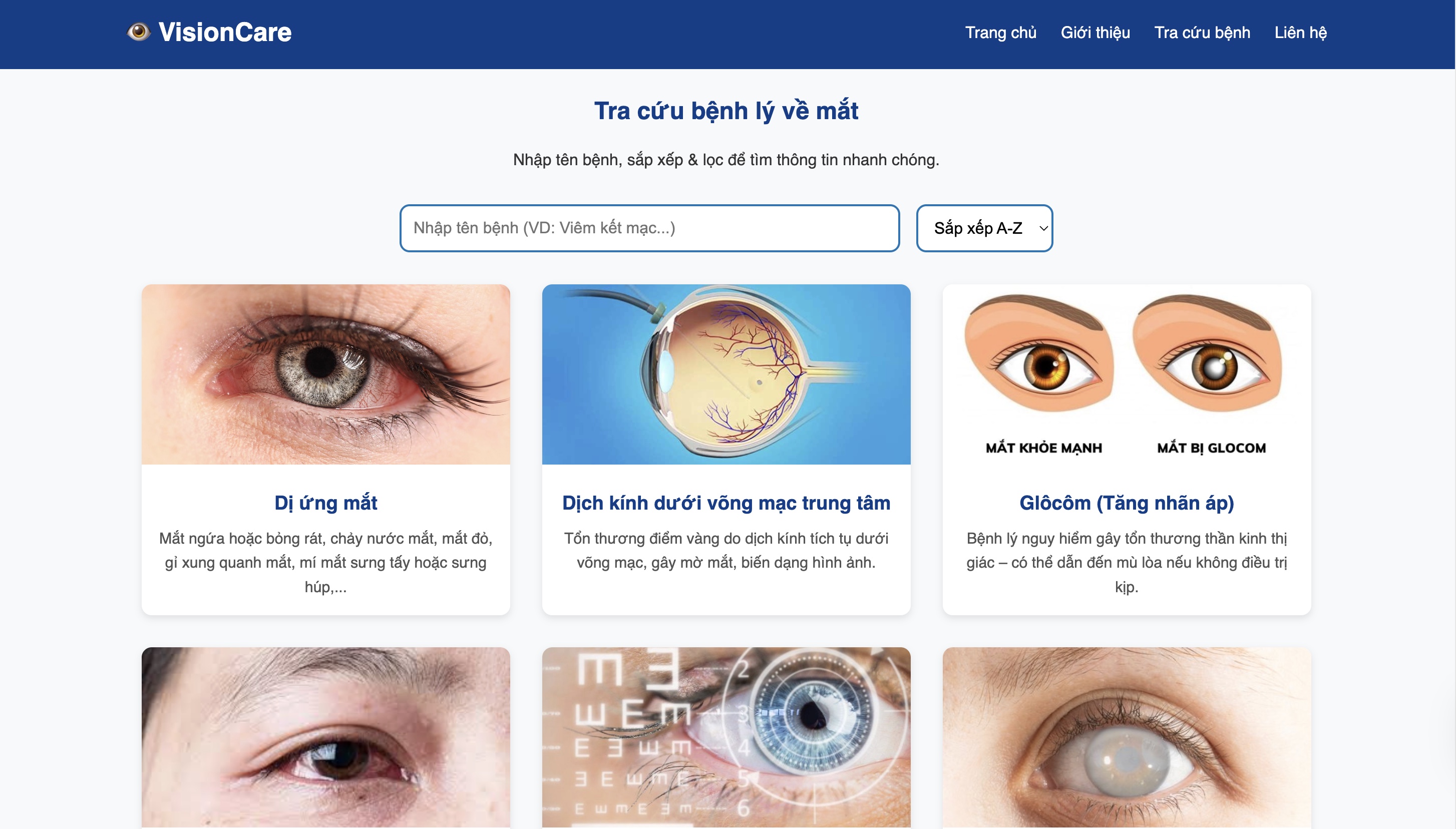

What We Built: VisionCare is a comprehensive web platform that combines: (1) multi-disease retinal AI diagnosis (classification + vessel/macula-related segmentation), (2) an eye-disease knowledge library for lookup, and (3) an EyeVision medical chatbot for fast Q&A and guidance.

Core AI Modules:

A) Multi-Disease Classification (11 classes):

Cataract, Central Serous Chorioretinopathy, Diabetic Retinopathy, Disc Edema, Glaucoma, Normal, Macular Scar, Myopia, Pterygium, Retinal Detachment, Retinitis Pigmentosa.

Users can run one model or compare multiple models for more objective decisions.

Model Options (ODIR-5K + merged public retinal datasets):

- EfficientNetV2_rw_s - 95.3%

- DenseNet121 - 93.9%

- EfficientNet-B4 - 92.7%

- SwinV2_base_window8_256 - 91.8%
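How VisionCare combines or displays several models is not described here. One common approach, shown as a sketch, is soft voting: average each class probability across models, then report how many models agree with the averaged result. The function name and the probabilities below are made up for illustration.

```python
def compare_models(predictions):
    """predictions: {model_name: {class_name: probability}} (hypothetical shape)."""
    # Top-1 label of each individual model.
    per_model = {name: max(probs, key=probs.get) for name, probs in predictions.items()}
    classes = list(next(iter(predictions.values())))
    # Soft voting: mean probability per class across all models.
    averaged = {c: sum(p[c] for p in predictions.values()) / len(predictions) for c in classes}
    consensus = max(averaged, key=averaged.get)
    agreement = sum(label == consensus for label in per_model.values()) / len(per_model)
    return {"per_model": per_model, "consensus": consensus, "agreement": agreement}

# Made-up probabilities over three of the eleven classes.
predictions = {
    "EfficientNetV2_rw_s": {"Glaucoma": 0.70, "Normal": 0.20, "Cataract": 0.10},
    "DenseNet121": {"Glaucoma": 0.55, "Normal": 0.35, "Cataract": 0.10},
    "EfficientNet-B4": {"Glaucoma": 0.40, "Normal": 0.50, "Cataract": 0.10},
}
result = compare_models(predictions)
print(result["consensus"], round(result["agreement"], 2))
```

A low agreement value is a useful signal that a case deserves a closer look from the doctor.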

B) Vessel Segmentation: U-Net with a ResNet-50 encoder (ImageNet-pretrained) produces a binary mask to visualize vessel structure and affected regions. The system also reports quantitative coverage (pixel count / ratio) to support follow-up tracking.
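The coverage figures (pixel count and ratio) follow directly from the binary mask. A minimal sketch, assuming the mask is a 2D grid of 0/1 values (the function name and the toy mask are illustrative):

```python
def vessel_coverage(mask):
    """Return (vessel_pixel_count, vessel_ratio) for a 2D mask of 0/1 values."""
    total = sum(len(row) for row in mask)
    vessel_pixels = sum(1 for row in mask for value in row if value)
    ratio = vessel_pixels / total if total else 0.0
    return vessel_pixels, ratio

# Toy 4x4 mask; a real mask would match the fundus image resolution.
mask = [
    [0, 1, 0, 0],
    [0, 1, 1, 0],
    [0, 0, 1, 0],
    [0, 0, 0, 0],
]
pixels, ratio = vessel_coverage(mask)
print(pixels, ratio)  # → 4 0.25
```

Storing both numbers per visit makes it possible to compare coverage between follow-up examinations.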

C) Explainable AI (Trust Layer): VisionCare integrates Grad-CAM, Grad-CAM++, and LIME to highlight the image regions that drive predictions (heatmap overlay + important superpixels), making results easier for doctors and patients to verify.
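The core of Grad-CAM is small enough to sketch without a deep learning framework. Each feature map of the last convolutional layer is weighted by the spatial average of its gradient with respect to the class score; the weighted maps are summed and passed through ReLU. The example assumes the activations and gradients have already been extracted from the model; the tiny 2×2 maps are made up.

```python
def grad_cam(activations, gradients):
    """activations, gradients: K feature maps, each an H x W list of floats."""
    height, width = len(activations[0]), len(activations[0][0])
    # Channel weight = global average of that channel's gradients.
    weights = [sum(map(sum, grad)) / (height * width) for grad in gradients]
    cam = [[0.0] * width for _ in range(height)]
    for weight, fmap in zip(weights, activations):
        for i in range(height):
            for j in range(width):
                cam[i][j] += weight * fmap[i][j]
    # ReLU keeps only the regions that increase the class score.
    cam = [[max(value, 0.0) for value in row] for row in cam]
    peak = max(max(row) for row in cam)
    # Scale to [0, 1] so the map can be rendered as a heatmap overlay.
    return [[value / peak for value in row] for row in cam] if peak > 0 else cam

# Two made-up 2x2 feature maps and their gradients.
activations = [[[1.0, 0.0], [0.0, 2.0]], [[0.0, 3.0], [1.0, 0.0]]]
gradients = [[[0.5, 0.5], [0.5, 0.5]], [[-1.0, -1.0], [-1.0, -1.0]]]
heatmap = grad_cam(activations, gradients)
print(heatmap)  # → [[0.5, 0.0], [0.0, 1.0]]
```

In practice the low-resolution map is upsampled to the input size before being overlaid on the fundus image.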

D) Severity Scoring (Diabetic Retinopathy): Beyond detection, the system estimates severity levels (None -> Mild -> Moderate -> Severe -> Proliferative) using a multi-task design (classification + regression) with Focal Loss and Continuous Kappa Loss.
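Two pieces of this design can be illustrated briefly: the focal loss for the classification head, and the mapping from a continuous regression output to one of the five grades. The values `gamma=2.0` and `alpha=1.0`, and the rounding rule, are assumptions for illustration; the project's actual hyperparameters are not stated, and the Continuous Kappa Loss is not sketched here.

```python
import math

GRADES = ["None", "Mild", "Moderate", "Severe", "Proliferative"]

def focal_loss(probs, target_index, gamma=2.0, alpha=1.0):
    """Focal loss for one sample: -alpha * (1 - p_t)^gamma * log(p_t)."""
    p_t = probs[target_index]
    return -alpha * (1.0 - p_t) ** gamma * math.log(p_t)

def grade_from_score(score):
    """Map a continuous severity score to the nearest grade, clamped to 0..4."""
    index = min(max(round(score), 0), len(GRADES) - 1)
    return GRADES[index]

# A confident correct prediction is penalised far less than an uncertain one.
easy = focal_loss([0.9, 0.1], target_index=0)
hard = focal_loss([0.6, 0.4], target_index=0)
print(easy < hard)              # → True
print(grade_from_score(2.7))    # → Severe
```

The `(1 - p_t)^gamma` factor shrinks the loss for well-classified samples, which helps when some severity grades are much rarer than others.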

E) Records, Reporting, and Data Collection: For the doctor workflow, VisionCare stores patient metadata and AI outputs (labels, confidence, ICD-10, heatmaps, masks, severity score) into SQLite and generates a medical-style PDF report via FPDF. An optional "save for training" feature supports a human-in-the-loop improvement loop.
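Storing one AI result in SQLite could look roughly like the sketch below. The table name, column names, file paths, and sample values are hypothetical; only the kinds of fields (label, confidence, ICD-10, heatmap, mask, severity score, "save for training" flag) come from the description above.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # a real deployment would use a file path
conn.execute("""
    CREATE TABLE diagnoses (
        id INTEGER PRIMARY KEY AUTOINCREMENT,
        patient_name TEXT NOT NULL,
        label TEXT NOT NULL,
        confidence REAL NOT NULL,
        icd10 TEXT,
        severity_score REAL,
        heatmap_path TEXT,
        mask_path TEXT,
        save_for_training INTEGER NOT NULL DEFAULT 0,
        created_at TEXT DEFAULT CURRENT_TIMESTAMP
    )
""")

def save_diagnosis(conn, record):
    """Insert one result using named parameters; returns the new row id."""
    cursor = conn.execute(
        """INSERT INTO diagnoses
           (patient_name, label, confidence, icd10, severity_score,
            heatmap_path, mask_path, save_for_training)
           VALUES (:patient_name, :label, :confidence, :icd10, :severity_score,
                   :heatmap_path, :mask_path, :save_for_training)""",
        record,
    )
    conn.commit()
    return cursor.lastrowid

row_id = save_diagnosis(conn, {
    "patient_name": "Patient A",            # illustrative values only
    "label": "Glaucoma",
    "confidence": 0.93,
    "icd10": "H40.9",
    "severity_score": None,
    "heatmap_path": "outputs/heatmap_1.png",
    "mask_path": "outputs/mask_1.png",
    "save_for_training": 1,
})
print(row_id)  # → 1
```

Named parameters keep user-supplied values out of the SQL string, which avoids injection issues when the form data comes from the web UI.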

Performance & Real-World Impact: The system achieves high accuracy (typically 93-96%) with fast inference (often < 8 seconds). In a pilot at a local eye clinic (Hoc Mon), VisionCare screened 100+ fundus images and reduced average diagnostic time from about 15 minutes to 4-5 minutes per case while improving early detection throughput.

Why It Matters: VisionCare is designed for practical deployment as a lightweight web app - supporting community clinics, district/provincial hospitals, and tele-ophthalmology workflows - so more people can access early screening and timely referrals.

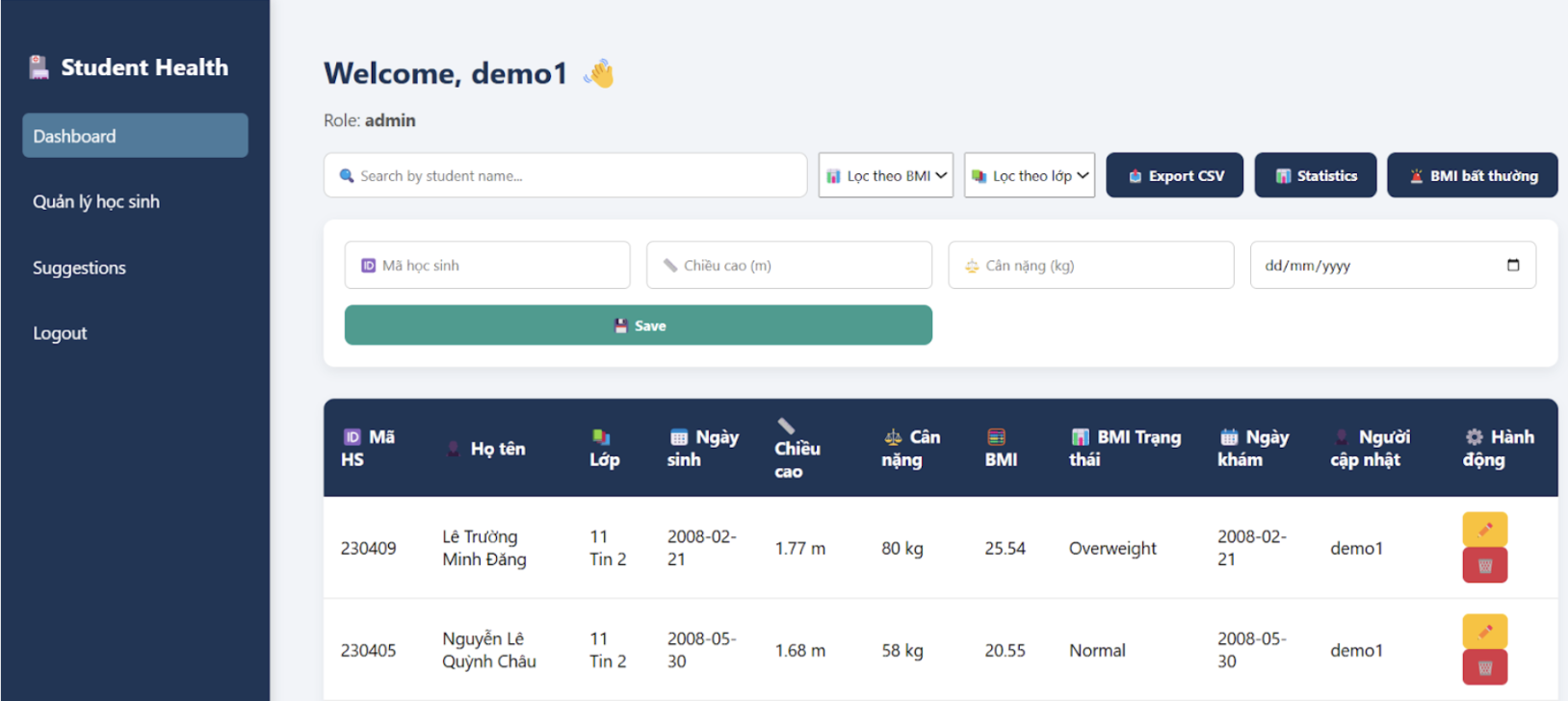

Overview: SHMS is a web-based platform designed to store, manage, and process student health data in secondary schools. It digitizes routine health check records (height, weight, BMI, examination date) and organizes them into a structured system so schools can track physical development continuously at both the individual and class/grade level.
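BMI is weight in kilograms divided by the square of height in metres. SHMS itself is written in PHP; the Python sketch below only illustrates the calculation and a simple per-student history. It assumes height is recorded in centimetres and weight in kilograms, and the records shown are made up.

```python
def bmi(weight_kg, height_cm):
    """Body mass index: weight (kg) / height (m) squared, rounded to 1 decimal."""
    height_m = height_cm / 100
    return round(weight_kg / height_m ** 2, 1)

def bmi_history(records):
    """records: list of (exam_date, height_cm, weight_kg), returned sorted by date."""
    return [(date, bmi(weight, height)) for date, height, weight in sorted(records)]

# Made-up checkups for one student.
records = [("2025-03-01", 160, 50.0), ("2024-09-01", 150, 45.0)]
print(bmi_history(records))
# → [('2024-09-01', 20.0), ('2025-03-01', 19.5)]
```

For school-age children, the raw BMI value is usually interpreted against age- and sex-specific reference charts rather than fixed adult thresholds.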

The Problem: In many schools, health records are still handled manually or scattered across small files, which increases errors, slows reporting, and makes it difficult to detect trends or abnormalities early.

Solution: SHMS uses a lightweight setup (PHP + SQLite) that can run on a personal computer or a school internal network without complex server requirements. The system enforces role-based access (admin vs student) to protect privacy, and passwords are securely hashed.
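The two security ideas mentioned here, salted password hashing and a role check, can be sketched as follows. SHMS is a PHP application, so this Python version is an analogue rather than the project's code: the choice of PBKDF2, the iteration count, and the rule that only admins may edit records are all assumptions.

```python
import hashlib
import hmac
import os

ITERATIONS = 200_000  # assumed work factor; tune for the target hardware

def hash_password(password, salt=None):
    """Return (salt, digest) using PBKDF2-HMAC-SHA256 with a random salt."""
    salt = salt if salt is not None else os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest

def verify_password(password, salt, expected_digest):
    """Re-hash the attempt with the stored salt and compare in constant time."""
    _, digest = hash_password(password, salt)
    return hmac.compare_digest(digest, expected_digest)

def can_edit_records(role):
    """Hypothetical rule: only the admin role may change health records."""
    return role == "admin"

salt, stored = hash_password("correct horse")
print(verify_password("correct horse", salt, stored))  # → True
print(verify_password("wrong guess", salt, stored))    # → False
print(can_edit_records("student"))                     # → False
```

Only the salt and digest are stored, so a leaked database file does not directly reveal any password.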

Core Features:

System Design: The database is structured around student profiles, health records, edit history, and student-submitted suggestions, supporting one-to-many relationships for repeated checkups and change logs.
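The one-to-many structure can be pictured with a small SQLite schema. All table and column names below are hypothetical; only the four entities (student profiles, health records, edit history, suggestions) come from the description above.

```python
import sqlite3

SCHEMA = """
CREATE TABLE students (
    id INTEGER PRIMARY KEY,
    full_name TEXT NOT NULL,
    class_name TEXT NOT NULL
);
-- One student has many checkups.
CREATE TABLE health_records (
    id INTEGER PRIMARY KEY,
    student_id INTEGER NOT NULL REFERENCES students(id),
    exam_date TEXT NOT NULL,
    height_cm REAL NOT NULL,
    weight_kg REAL NOT NULL
);
-- One checkup has many logged edits.
CREATE TABLE edit_history (
    id INTEGER PRIMARY KEY,
    record_id INTEGER NOT NULL REFERENCES health_records(id),
    edited_at TEXT NOT NULL,
    note TEXT
);
CREATE TABLE suggestions (
    id INTEGER PRIMARY KEY,
    student_id INTEGER NOT NULL REFERENCES students(id),
    message TEXT NOT NULL
);
"""

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite does not enforce foreign keys by default
conn.executescript(SCHEMA)

conn.execute("INSERT INTO students (id, full_name, class_name) VALUES (1, 'Student A', '7A1')")
conn.executemany(
    "INSERT INTO health_records (student_id, exam_date, height_cm, weight_kg) VALUES (?, ?, ?, ?)",
    [(1, "2024-09-01", 150, 45), (1, "2025-03-01", 153, 47)],
)
rows = conn.execute(
    "SELECT exam_date, height_cm FROM health_records WHERE student_id = 1 ORDER BY exam_date"
).fetchall()
print(len(rows))  # → 2
```

Keeping each checkup as its own row is what makes growth tracking over time a simple query rather than a manual comparison of files.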

How to Run (Teacher-Friendly):

Install PHP (>= 7.4). Start a local server, open /init_db.php to generate the SQLite database file, then access /login.html to register and use the system. No MySQL, Docker, or complex configuration required.